Randomized controlled trial

Editor-In-Chief: C. Michael Gibson, M.S., M.D. [1]

Overview

A randomized controlled trial (RCT) is a scientific procedure most commonly used in testing medicines or medical procedures. RCTs are considered the most reliable form of scientific evidence because random allocation minimizes spurious causality and bias. RCTs are mainly used in clinical studies, but are also employed in other sectors such as judicial, educational, and social research. Clinical RCTs involve allocating treatments to subjects at random. This ensures that the different treatment groups are 'statistically equivalent'.

Sellers of medicines throughout the ages have had to convince their consumers that the medicine works. As science has progressed, public expectations have risen, and government health budgets have become ever tighter, pressure has grown for a reliable system to do this. Moreover, the public's concern for the dangers of medical interventions has spurred both legislators and administrators to provide an evidential basis for licensing or paying for new procedures and medications. In most modern health-care systems all new medicines and surgical procedures therefore have to undergo trials before being approved.

Trials are used to establish the average efficacy of a treatment as well as to learn about its most frequently occurring side-effects. This is meant to address the following concerns. First, effects of a treatment may be small and therefore undetectable except when studied systematically on a large population. Second, biological organisms (including humans) are complex, and do not react to the same stimulus in the same way, which makes inference from single clinical reports very unreliable and generally unacceptable as scientific evidence. Third, some conditions will spontaneously go into remission, with many extant reports of miraculous cures for no discernible reason. Finally, it is well established that the simple process of administering the treatment may have direct psychological effects on the patient, sometimes very powerful ones; this is known as the placebo effect.

Efficacy is how well a treatment performs under ideal circumstances (and is studied in a clinical trial) whereas effectiveness is performance in the real world[1][2].

Types of trials

Randomized trials are employed to test efficacy while avoiding these factors. Trials may be open, blind or double-blind.

Open trial

In an open trial, the researcher knows the full details of the treatment, and so does the patient. These trials are open to challenge for bias, and they do nothing to reduce the placebo effect. However, sometimes they are unavoidable, particularly in relation to surgical techniques, where it may not be possible or ethical to hide from the patient which treatment he or she received. Usually this kind of study design is used in bioequivalence studies.

Blind trials

Single-blind trial

In a single-blind trial, the researcher knows the details of the treatment but the patient does not. Because the patient does not know which treatment is being administered (the new treatment or another treatment), the placebo effect should be similar across treatment groups. In practice, since the researcher knows, it is possible for them to treat the patient differently or to subconsciously hint at important treatment-related details, thus influencing the outcome of the study.

Double-blind trial

In a double-blind trial, one researcher allocates a series of numbers to 'new treatment' or 'old treatment'. The second researcher is told the numbers, but not what they have been allocated to. Since the second researcher does not know, they cannot possibly tell the patient, directly or otherwise, and cannot give in to patient pressure to give them the new treatment. In this system, there is also often a more realistic distribution of sexes and ages of patients. Therefore double-blind (or randomized) trials are preferred, as they tend to give the most accurate results.

Triple-blind trial

Some randomized controlled trials are considered triple-blinded, although the meaning of this may vary according to the exact study design. The most common meaning is that the subject, researcher and person administering the treatment (often a pharmacist) are blinded to what is being given. Alternatively, it may mean that the patient, researcher and statistician are blinded. These additional precautions are often in place with the more commonly accepted term "double blind trials", and thus the term "triple-blinded" is infrequently used. However, it connotes an additional layer of security to prevent undue influence of study results by anyone directly involved with the study.

n-of-1

"N-of-1 or single subject clinical trials consider an individual patient as the sole unit of observation in a study investigating the efficacy or side-effect profiles of different interventions"[3].

In this design, the outcome may be reported with the nocebo ratio ("the ratio of symptom intensity induced by taking placebo to the symptom intensity induced by taking a statin")[4].

The SPENT checklist guides reporting of n-of-1 trials (https://www.equator-network.org/reporting-guidelines/spirit-extension-n-of-1-trials-spent-2019/)[5].

An international consortium has been established to help n-of-1 trials[6].

Aspects of control in clinical trials

Traditionally the control in randomized controlled trials refers to studying a group of treated patients not in isolation but in comparison to other groups of patients, the control groups, who by not receiving the treatment under study give investigators important clues to the effectiveness of the treatment, its side effects, and the parameters that modify these effects.

Should control groups use placebo or active controls

Placebo controls are important, because the placebo effect can often be strong. The higher the financial cost a subject believes an unknown drug has, the stronger its placebo effect.[7] The use of historical rather than concurrent controls may lead to exaggerated estimation of effect.[8]

Comparing a new intervention to a placebo control may not be ethical when an accepted, effective treatment exists. In this case, the new intervention should be compared to the active control to establish whether the standard of care should change.[9] The observation that industry-sponsored research may be more likely to conduct trials that have positive results suggests that industry is not picking the most appropriate comparison group.[10] However, it is possible that industry is better at predicting which new innovations are likely to be successful and discontinuing research for less promising interventions before the trial stage.

There are times when placebo control is appropriate even when there is accepted, effective treatment.[11][12][13]

The placebo effect can be seen in controlled trials of surgical interventions with the control group receiving a sham procedure.[14][15][16] Guidelines by the American Medical Association address the use of placebo surgery.[17]

Control group optimization

Optimization of the delivery of health care in the control groups is important. This was illustrated in a randomized controlled trial of extracorporeal membrane oxygenation (ECMO). The trial showed no improvement in survival, although the survival of patients treated with ECMO was similar to prior published results.[18] However, the authors had protocolized the management of the mechanical ventilation in the control group and found high adherence to the protocols and higher than expected survival in the control group. In a subsequent randomized controlled trial, the authors found that protocolized management of mechanical ventilation was better than usual care management of mechanical ventilation.[19]

Another example is the use of procalcitonin to guide antibiotic therapy in community-acquired pneumonia.

- In the initial studies, such as the ProHOSP trial[20], that showed benefit from the use of procalcitonin to guide antibiotics, the control groups were not optimized and did not follow the existing clinical practice guideline by the IDSA[21].

- In the subsequent ProACT trial, the control group followed the IDSA guidelines, and in this trial the use of procalcitonin did not reduce the usage of antibiotics[22].

Types of control groups

- Placebo concurrent control group

- Dose-response concurrent control group

- Active concurrent control group

- No treatment concurrent control group

- Historical control

Randomization in clinical trials

There are two processes involved in randomizing patients to different interventions. First is choosing a randomization procedure to generate a random and unpredictable sequence of allocations. This may be a simple random assignment of patients to any of the groups at equal probabilities, or may be complex and adaptive. A second and more practical issue is allocation concealment, which refers to the stringent precautions taken to ensure that the group assignment of patients is not revealed to the study investigators prior to definitively allocating them to their respective groups.

Randomization procedures

There are several statistical issues to consider in generating the randomization sequences[23]:

- Balance: since most statistical tests are most powerful when the groups being compared have equal sizes, it is desirable for the randomization procedure to generate similarly-sized groups.

- Selection bias: depending on the amount of structure in the randomization procedure, investigators may be able to infer the next group assignment by guessing which of the groups has been assigned the least up to that point. This breaks allocation concealment (see below) and can lead to bias in the selection of patients for enrollment in the study.

- Accidental bias: if important covariates that are related to the outcome are ignored in the statistical analysis, estimates arising from that analysis may be biased. The potential magnitude of that bias, if any, will depend on the randomization procedure.

Complete randomization

In this commonly used and intuitive procedure, each patient is effectively randomly assigned to any one of the groups. It is simple and optimal in the sense of robustness against both selection and accidental biases. However, its main drawback is the possibility of imbalances between the groups. In practice, imbalance is only a concern for small sample sizes (n < 200).
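
The imbalance risk described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: two arms labelled E and C, equal allocation probabilities, and hypothetical function names (`complete_randomization`, `imbalance`, `mean_relative_imbalance`) that do not come from the source.

```python
import random

def complete_randomization(n_patients, rng):
    """Assign each patient to E (experimental) or C (control) with probability 1/2."""
    return [rng.choice("EC") for _ in range(n_patients)]

def imbalance(assignments):
    """Absolute difference between the two group sizes."""
    return abs(assignments.count("E") - assignments.count("C"))

def mean_relative_imbalance(n_patients, n_simulations=500, seed=0):
    """Average imbalance, as a fraction of the sample size, over simulated trials."""
    rng = random.Random(seed)  # fixed seed (an arbitrary choice) for reproducibility
    total = sum(imbalance(complete_randomization(n_patients, rng))
                for _ in range(n_simulations))
    return total / (n_simulations * n_patients)

if __name__ == "__main__":
    # Relative imbalance shrinks as the trial gets larger.
    for n in (20, 200, 1000):
        print(n, round(mean_relative_imbalance(n), 3))
```

In such a simulation the average imbalance, expressed as a share of the sample size, falls as the number of patients grows, which is consistent with imbalance being mainly a concern for small trials.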

Permuted block randomization

In this form of restricted randomization, blocks of k patients are created such that balance is enforced within each block. For instance, let E stand for experimental group and C for control group, then a block of k = 4 patients may be assigned to one of EECC, ECEC, ECCE, CEEC, CECE, and CCEE, with equal probabilities of 1/6 each. Note that there are equal numbers of patients assigned to the experiment and the control group in each block.
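
The six possible blocks for k = 4 can be enumerated directly. The sketch below assumes two arms and an even block size; the function names `balanced_blocks` and `permuted_block_sequence` are illustrative and not taken from the source.

```python
import random
from itertools import permutations

def balanced_blocks(block_size=4):
    """All distinct orderings of a block with equal numbers of E and C."""
    half = block_size // 2
    return sorted({"".join(p) for p in permutations("E" * half + "C" * half)})

def permuted_block_sequence(n_blocks, block_size=4, seed=None):
    """Concatenate n_blocks blocks, each drawn with equal probability."""
    rng = random.Random(seed)
    blocks = balanced_blocks(block_size)
    return "".join(rng.choice(blocks) for _ in range(n_blocks))

print(balanced_blocks(4))
# ['CCEE', 'CECE', 'CEEC', 'ECCE', 'ECEC', 'EECC']
```

Because each block is drawn uniformly from the six arrangements, each has probability 1/6, and every block of four contains exactly two E and two C assignments.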

Permuted block randomization has several advantages. In addition to promoting group balance at the end of the trial, it also promotes periodic balance in the sense that sequential patients are distributed equally between groups. This is particularly important because clinical trials enroll patients sequentially, such that there may be systematic differences between patients entering at different times during the study.

Unfortunately, by enforcing within-block balance, permuted block randomization is particularly susceptible to selection bias. That is, since toward the end of each block the investigators know the group with the least assignment up to that point must be assigned proportionally more of the remainder, predicting future group assignment becomes progressively easier. The remedy for this bias is to blind investigators to group assignments and to the randomization procedure itself.
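
The predictability can be made concrete for blocks of four. The sketch below assumes an unblinded investigator who knows the block size and all earlier assignments in the current block; the helper names `forced_next_assignment` and `predictable_fraction` are hypothetical.

```python
def forced_next_assignment(block_so_far, block_size=4):
    """Return 'E' or 'C' if the next assignment is already determined, else None."""
    half = block_size // 2
    if len(block_so_far) >= block_size:
        return None  # block is complete; the next patient starts a new block
    if block_so_far.count("E") == half:
        return "C"   # E is used up, so the rest of the block must be C
    if block_so_far.count("C") == half:
        return "E"   # C is used up, so the rest of the block must be E
    return None

def predictable_fraction(blocks):
    """Share of assignments that are fully determined by earlier ones in the block."""
    forced = sum(forced_next_assignment(block[:i], len(block)) is not None
                 for block in blocks for i in range(len(block)))
    return forced / sum(len(block) for block in blocks)

BLOCKS = ["EECC", "ECEC", "ECCE", "CEEC", "CECE", "CCEE"]
print(forced_next_assignment("EE"))   # C
print(predictable_fraction(BLOCKS))   # 8 of 24 assignments are forced
```

Under these assumptions one assignment in three can be predicted with certainty, which is why blinding investigators to the procedure matters.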

Strictly speaking, permuted block randomization should be followed by statistical analysis that takes the blocking into account. However, for small block sizes this may become infeasible. In practice it is recommended that intra-block correlation be examined as a part of the statistical analysis.

A special case of permuted block randomization is random allocation, in which the entire sample is treated as one block.

Urn randomization

Covariate-adaptive randomization

When there are a number of variables that may influence the outcome of a trial (for example, patient age, gender or previous treatments) it is desirable to ensure a balance across each of these variables. This can be done with a separate list of randomization blocks for each combination of values, although this is only feasible when the number of lists is small compared to the total number of patients. When the number of variables or possible values is large, a statistical method known as minimisation can be used to minimize the imbalance within each of the factors.
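
A simplified, deterministic form of minimisation can be sketched as follows. This is an illustration only: the class name `Minimiser`, the unweighted sum over factors, and the random tie-break are assumptions, and published minimisation schemes often add factor weights and a random element to every assignment.

```python
import random
from collections import defaultdict

class Minimiser:
    """Assign each new patient to the arm that currently holds the fewest
    patients sharing that patient's factor levels."""

    def __init__(self, arms=("E", "C"), seed=None):
        self.arms = arms
        self.rng = random.Random(seed)
        # counts[arm][(factor, level)] = patients in `arm` with that level
        self.counts = {arm: defaultdict(int) for arm in arms}

    def assign(self, patient):
        """patient: dict of factor name -> level, e.g. {"sex": "F", "age": "65+"}."""
        totals = {arm: sum(self.counts[arm][item] for item in patient.items())
                  for arm in self.arms}
        lowest = min(totals.values())
        candidates = [arm for arm in self.arms if totals[arm] == lowest]
        arm = self.rng.choice(candidates)  # ties are broken at random
        for item in patient.items():
            self.counts[arm][item] += 1
        return arm

minimiser = Minimiser(seed=0)
for patient in [{"sex": "F", "age": "65+"}, {"sex": "F", "age": "<65"},
                {"sex": "M", "age": "65+"}]:
    print(patient, minimiser.assign(patient))
```

The design choice here is to track only marginal counts per factor level, so the method stays workable even when the number of factor combinations is large relative to the number of patients.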

Outcome-adaptive randomization

For a randomized trial in human subjects to be ethical, the investigator must believe before the trial begins that all treatments under consideration are equally desirable. At the end of the trial, one treatment may be selected as superior if a statistically significant difference was discovered. Between the beginning and end of the trial is an ethical grey zone. As patients are treated, evidence may accumulate that one treatment is superior, and yet patients are still randomized equally between all treatments until the trial ends.

Outcome-adaptive randomization is a variation on traditional randomization designed to address the ethical issue raised above. Randomization probabilities are adjusted continuously throughout the trial in response to the data. The probability of a treatment being assigned increases as the probability of that treatment being superior increases. The statistical advantages of randomization are retained, while on average more patients are assigned to superior treatments.
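
One way to realize this idea, shown here purely as a sketch, is a Bayesian scheme with binary outcomes in which the probability of assigning the next patient to arm E equals the estimated probability that E has the higher response rate. The source does not specify a method; the Beta(1, 1) priors, the Monte Carlo estimate, and the names `prob_e_superior` and `next_assignment` are all assumptions.

```python
import random

def prob_e_superior(successes_e, failures_e, successes_c, failures_c,
                    n_draws=5000, seed=0):
    """Monte Carlo estimate of P(response rate of E > response rate of C),
    assuming independent Beta(1, 1) priors on the two response rates."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(n_draws):
        draw_e = rng.betavariate(1 + successes_e, 1 + failures_e)
        draw_c = rng.betavariate(1 + successes_c, 1 + failures_c)
        wins += draw_e > draw_c
    return wins / n_draws

def next_assignment(outcomes, rng):
    """outcomes: {"E": (successes, failures), "C": (successes, failures)}."""
    s_e, f_e = outcomes["E"]
    s_c, f_c = outcomes["C"]
    p_e = prob_e_superior(s_e, f_e, s_c, f_c)
    return "E" if rng.random() < p_e else "C"

if __name__ == "__main__":
    rng = random.Random(42)
    print(prob_e_superior(0, 0, 0, 0))    # close to 0.5 before any data
    print(prob_e_superior(18, 2, 5, 15))  # close to 1 when E looks much better
    print(next_assignment({"E": (18, 2), "C": (5, 15)}, rng))
```

With no data the two arms are assigned with roughly equal probability, and the allocation shifts toward the apparently better arm as outcomes accumulate. Practical designs often cap the allocation probabilities or begin with a period of equal randomization; those refinements are omitted here.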

Allocation concealment

In practice, in taking care of individual patients, clinical investigators often find it difficult to maintain impartiality. Stories abound of investigators holding up sealed envelopes to lights or ransacking offices to determine group assignments in order to dictate the assignment of their next patient[24]. This introduces selection bias and confounders and distorts the results of the study. Breaking allocation concealment in randomized controlled trials is that much more problematic because in principle the randomization should have minimized such biases.

Some standard methods of ensuring allocation concealment include:

- Sequentially-Numbered, Opaque, Sealed Envelopes (SNOSE)

- Sequentially-numbered containers

- Pharmacy controlled

- Central randomization

Great care for allocation concealment must go into the clinical trial protocol, and the concealment procedure must be reported in detail in the publication. Recent studies have found that not only do most publications not report their concealment procedure, but most of the publications that do not report it also have unclear concealment procedures in their protocols[25][26].

Analysis

Noninferiority and equivalence randomized trials

As stated in The Declaration of Helsinki by the World Medical Association it is unethical to give any patient a placebo treatment if an existing treatment option is known to be beneficial.[27][28] Many scientists and ethicists consider that the U.S. Food and Drug Administration, by demanding placebo-controlled trials, encourages the systematic violation of the Declaration of Helsinki.[29] In addition, the use of placebo controls remains a convenient way to avoid direct comparisons with a competing drug.

The appropriate use of placebo is being revised.[11][12] When guidelines suggest a placebo is an unethical control, then an "active-control noninferiority trial" may be used.[30] To establish non-inferiority, the following three conditions should be - but frequently are not - established:[30]

- "The treatment under consideration exhibits therapeutic noninferiority to the active control."

- "The treatment would exhibit therapeutic efficacy in a placebo-controlled trial if such a trial were to be performed."

- "The treatment offers ancillary advantages in safety, tolerability, cost, or convenience."

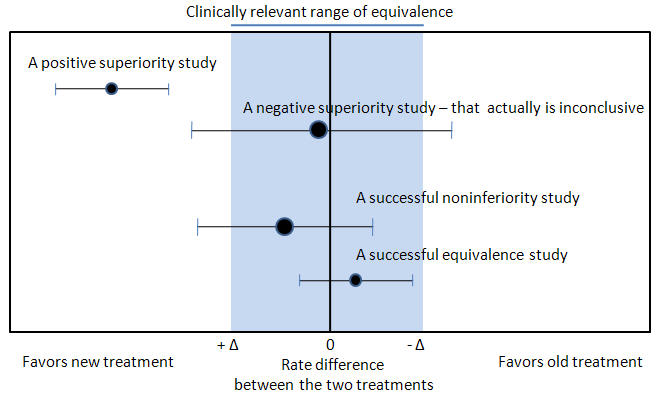

Noninferiority and equivalence randomized trials are difficult to execute well.[30] Guidelines exist for noninferiority and equivalence randomized trials.[31] In planning the trial, the investigators should choose "a noninferiority or equivalence criterion, and specifying the margin of equivalence with the rationale for its choice."[31] In reporting the outcomes, the authors should present the confidence intervals to show the reader whether the result was within their noninferiority or equivalence criterion.[31]
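
The comparison of a confidence interval with a prespecified margin can be sketched for a binary outcome. The example assumes that higher success rates are better, uses a simple Wald interval for the risk difference (new minus active control) with z = 1.96, and uses made-up counts; the function names and the margins are hypothetical, and a real trial would follow its own prespecified analysis plan.

```python
import math

def risk_difference_ci(success_new, n_new, success_ctrl, n_ctrl, z=1.96):
    """Wald confidence interval for the difference in success proportions
    (new treatment minus active control)."""
    p_new = success_new / n_new
    p_ctrl = success_ctrl / n_ctrl
    diff = p_new - p_ctrl
    se = math.sqrt(p_new * (1 - p_new) / n_new + p_ctrl * (1 - p_ctrl) / n_ctrl)
    return diff - z * se, diff + z * se

def is_noninferior(ci, margin):
    """Noninferior if the whole interval lies above -margin."""
    lower, _upper = ci
    return lower > -margin

# Illustrative (invented) data: 170/200 successes on new, 172/200 on control.
ci = risk_difference_ci(170, 200, 172, 200)
print(ci)
print(is_noninferior(ci, margin=0.10))  # True: lower bound is about -0.08
print(is_noninferior(ci, margin=0.05))  # False: the interval crosses -0.05
```

The same data support noninferiority under a margin of 0.10 but not under 0.05, which is why the margin and its rationale need to be fixed before the trial.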

Difficulties

Biased results are more common, especially in trials with subjective outcomes, when a trial has:[32]

- Inadequate or unclear random-sequence generation

- Inadequate or unclear allocation concealment

- Lack of or unclear double-blinding

A major difficulty in dealing with trial results comes from commercial, political and/or academic pressure. Most trials are expensive to run, and will be the result of significant previous research, which is itself not cheap. There may be a political issue at stake (compare MMR vaccine) or vested interests (compare homeopathy). In such cases there is great pressure to interpret results in a way which suits the viewer, and great care must be taken by researchers to maintain emphasis on clinical facts.

Regarding data analyses of randomized controlled trials, research sponsored by industry may incompletely report or analyze drug toxicity.[33][34] Similarly, industry-sponsored trials may be more likely to omit intention-to-treat analyses.[35] These problems with statistical analyses have led the Journal of the American Medical Association (JAMA) to require independent analysis of data.[36][37] This policy has been associated with a decrease in the number of trials published by JAMA.[38]

Most studies start with a 'null hypothesis' which is being tested (usually along the lines of 'Our new treatment x cures as many patients as existing treatment y') and an alternative hypothesis ('x cures more patients than y'). The analysis at the end will give a statistical likelihood, based on the data, of whether the null hypothesis can be safely rejected (saying that the new treatment does, in fact, result in more cures). Nevertheless this is only a statistical likelihood, so false negatives and false positives are possible. The risks of these errors are generally set at an acceptable level (e.g., a 1% chance of a false result). However, this risk is cumulative, so if 200 trials are done (often the case for contentious matters), about 2 will show contrary results. There is a tendency for these two to be seized on by those who need that proof for their point of view.
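
The arithmetic behind the "about 2 in 200" figure can be checked with a short calculation. The sketch assumes the trials are independent and that the null hypothesis is true in every one of them; the function names are illustrative.

```python
def expected_false_positives(n_trials, alpha):
    """Expected number of trials that reject a true null hypothesis."""
    return n_trials * alpha

def prob_at_least_one_false_positive(n_trials, alpha):
    """Probability that at least one of n independent trials is a false positive."""
    return 1 - (1 - alpha) ** n_trials

print(expected_false_positives(200, 0.01))                    # 2.0
print(round(prob_at_least_one_false_positive(200, 0.01), 3))  # 0.866
```

Under these assumptions, two spurious results are expected among 200 trials, and the chance of seeing at least one is roughly 87%.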

Small study effect

Small trials tend to report stronger effect estimates than larger trials.[39]

Publication bias

Publication bias refers to the tendency that trials that show a positive significant effect are more likely to be published than those that show no effect or are inconclusive.

Selective reporting bias

A variation of publication bias is selective reporting bias, or outcome reporting bias, which occurs when several outcomes within a trial are measured but are reported selectively depending on the strength and direction of the results.[35][40]

Trial registration

In September 2004, the International Committee of Medical Journal Editors (ICMJE) announced that all trials starting enrollment after July 1, 2005 must be registered prior to consideration for publication in one of the 12 member journals of the Committee.[41] This move was intended to reduce the risk of publication bias, as negative trials that are unpublished would be more easily discoverable.

Available trial registries include:

- http://clinicaltrials.gov

- European Union Drug Regulating Authorities Clinical Trials Database (EudraCT) https://eudract.ema.europa.eu

- As of December 2016, trials registered at EudraCT must have their results posted within one year of completing the trial. Just under half of eligible trials have complied[44].

- European Union Clinical Trials Register https://www.clinicaltrialsregister.eu/ctr-search/search (contains EudraCT registrations)

- International Clinical Trial Registry Platform (ICTRP) of the World Health Organization http://www.who.int/ictrp

- International Standard Randomised Controlled Trial Number (ISRCTN) http://isrctn.org (also includes nonrandomized studies)

Compliance with registration is monitored by:

- EudraCT is monitored by https://eu.trialstracker.net/ of the EBM DataLab at the University of Oxford[44].

- clinicaltrials.gov registration is being monitored by https://fdaaa.trialstracker.net/.

Ongoing issues:

- Five years after the ICMJE announced trial registration, registration may still occur late or not at all.[45][46]

- Subgroups may not be pre-specified in trial registrations[47].

- It is not clear how effective trial registration is because many registered trials are never completely published.[48]

- The U.S. FDA is working to strengthen reporting[49].

Impact:

- Trial registration may have reduced publication bias[50], although in one study of this the results did not quite reach significance[51]. More recent assessments of trial registration refute the association.[52][53]

- One explanation is that trial registration was more strongly associated with study results soon after it was introduced, and that this association weakened in later years.

Interim analysis - stopping trials early

Trials are increasingly stopped early[54]; however, this may induce a bias that exaggerates results[55][54]. Data safety and monitoring boards that are independent of the trial are commissioned to conduct interim analyses and make decisions about stopping trials early.[56] [57]

Reasons to stop a trial early are efficacy, safety, and futility.[58][59]

Regarding efficacy, various rules exist that adjust alpha to decide when to stop a trial early.[60][61][62][63][64] Commonly recommended rules are the O'Brien-Fleming rule, which requires a varying p-value depending on the number of interim analyses, and the Haybittle-Peto rule, which requires p<0.001 to stop a trial early.[60][61][65]
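
The Haybittle-Peto rule is simple enough to express as a decision function. The sketch below uses the interim threshold of p<0.001 given above; the final-analysis threshold of 0.05 is a conventional value assumed here and not stated in the source, and the function name and return strings are hypothetical.

```python
def haybittle_peto_decision(p_value, is_final_analysis,
                            interim_threshold=0.001, final_threshold=0.05):
    """Apply a fixed, strict threshold at interim looks and a conventional
    threshold (assumed to be 0.05) at the final analysis."""
    if is_final_analysis:
        return "efficacy shown" if p_value < final_threshold else "no efficacy shown"
    return "stop early for efficacy" if p_value < interim_threshold else "continue"

print(haybittle_peto_decision(0.01, is_final_analysis=False))    # continue
print(haybittle_peto_decision(0.0004, is_final_analysis=False))  # stop early for efficacy
print(haybittle_peto_decision(0.01, is_final_analysis=True))     # efficacy shown
```

An interim p-value of 0.01 would be conventionally significant, yet under this rule the trial continues. This covers only the efficacy criterion; stopping for safety or futility involves separate considerations.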

Using a more conservative stopping rule reduces the chance of a statistical alpha (Type I) error; however, these rules do not change the fact that the effect size may be exaggerated.[66][61] According to Bassler, "the more stringent the P-value threshold results must cross to justify stopping the trial, the more likely it is that a trial stopped early will overestimate the treatment effect."[59] A review of trials stopped early found that the earlier a trial was stopped, the larger was its reported treatment effect[54], especially if the trial had fewer than 500 total events[67]. Accordingly, examples exist of trials whose interim analyses were significant, but the trial was continued and the final analysis was less significant or was insignificant.[68][69][70]

Methods to correct for exaggeration exist.[71][66] A Bayesian approach to interim analysis may help reduce bias and adjust the estimate of effect.[72]

As an alternative to the alpha rules, conditional power can help decide when to stop trials early.[73][74]

Data rights

Rights to and ownership of data are important[75][76].

The SPIRIT guidelines for trial protocols include[77]:

- Item 29: Statement of who will have access to the final trial dataset, and disclosure of contractual agreements that limit such access for investigators

However, most medical schools do not examine contracts between academics and industry[78].

Academics report conflict and lack of data access[79][80].

Unnecessary trials

Some trials may be unnecessary because their hypotheses have already been established.[81] A cumulative meta-analysis found that 25 of 33 randomized controlled trials of streptokinase for the treatment of acute myocardial infarction were unnecessary.[82]

Authors of trials may fail to cite prior trials.[83]

Cumulative meta-analysis prior to a new trial may indicate trials that do not need to be executed.[84]

Seeding trials

Seeding trials are studies sponsored by industry whose "apparent purpose is to test a hypothesis. The true purpose is to get physicians in the habit of prescribing a new drug."[85] Examples include the ADVANTAGE[85] and STEPS[86] trials.

Conflict of interest

Trials sponsored by industry are more likely to report positive results[87] and this cannot be explained by 'Risk of bias' assessment.

Missing data

Several approaches to handling missing data have been reviewed.[88][89] Regarding assigning an outcome to the patient, using a 'last observation carried forward' (LOCF) analysis may introduce biases.[90]
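
How LOCF fills in missing values, and why it can bias results, can be seen in a minimal sketch. The function name `locf`, the use of `None` for a missed visit, and the example scores are all illustrative assumptions.

```python
def locf(observations):
    """Replace each missing value (None) with the most recent observed value.
    Values missing before any observation stay missing."""
    filled, last = [], None
    for value in observations:
        if value is not None:
            last = value
        filled.append(last)
    return filled

# Invented symptom scores for a patient who dropped out after the second visit.
print(locf([8, 6, None, None]))  # [8, 6, 6, 6]
```

The filled-in series assumes the patient's score stayed at 6 after dropout. If the patient would in fact have kept improving, or would have worsened, the imputed values misstate the outcome, which is one way LOCF can introduce bias.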

Presentation of results

Results may be presented with misleading "spin".[91]

References

- ↑ Revicki DA, Frank L (1999). "Pharmacoeconomic evaluation in the real world. Effectiveness versus efficacy studies". Pharmacoeconomics. 15 (5): 423–34. doi:10.2165/00019053-199915050-00001. PMID 10537960.

- ↑ Singal AG, Higgins PD, Waljee AK (2014). "A primer on effectiveness and efficacy trials". Clin Transl Gastroenterol. 5: e45. doi:10.1038/ctg.2013.13. PMC 3912314. PMID 24384867.

- ↑ Lillie EO, Patay B, Diamant J, Issell B, Topol EJ, Schork NJ (2011). "The n-of-1 clinical trial: the ultimate strategy for individualizing medicine?". Per Med. 8 (2): 161–173. doi:10.2217/pme.11.7. PMC 3118090. PMID 21695041.

- ↑ Wood FA, Howard JP, Finegold JA, Nowbar AN, Thompson DM, Arnold AD; et al. (2020). "N-of-1 Trial of a Statin, Placebo, or No Treatment to Assess Side Effects". N Engl J Med. 383 (22): 2182–2184. doi:10.1056/NEJMc2031173. PMID 33196154.

- ↑ Porcino AJ, Shamseer L, Chan AW, Kravitz RL, Orkin A, Punja S; et al. (2020). "SPIRIT extension and elaboration for n-of-1 trials: SPENT 2019 checklist". BMJ. 368: m122. doi:10.1136/bmj.m122. PMID 32107202.

- ↑ Nikles J, Onghena P, Vlaeyen JWS, Wicksell RK, Simons LE, McGree JM; et al. (2021). "Establishment of an International Collaborative Network for N-of-1 Trials and Single-Case Designs". Contemp Clin Trials Commun. 23: 100826. doi:10.1016/j.conctc.2021.100826. PMC 8350373. PMID 34401597.

- ↑ Waber, Rebecca L., Baba Shiv, Ziv Carmon, and Dan Ariely. 2008. Commercial Features of Placebo and Therapeutic Efficacy. JAMA 299, no. 9:1016-1017.

- ↑ Sacks H, Chalmers TC, Smith H (1982). "Randomized versus historical controls for clinical trials". Am. J. Med. 72 (2): 233–40. PMID 7058834.

- ↑ Rothman KJ, Michels KB (1994). "The continuing unethical use of placebo controls". N. Engl. J. Med. 331 (6): 394–8. PMID 8028622.

- ↑ Djulbegovic B, Lacevic M, Cantor A; et al. (2000). "The uncertainty principle and industry-sponsored research". Lancet. 356 (9230): 635–8. PMID 10968436.

- ↑ 11.0 11.1 Temple R, Ellenberg SS (2000). "Placebo-controlled trials and active-control trials in the evaluation of new treatments. Part 1: ethical and scientific issues". Ann Intern Med. 133: 455–63. PMID 10975964.

- ↑ 12.0 12.1 Ellenberg SS, Temple R (2000). "Placebo-controlled trials and active-control trials in the evaluation of new treatments. Part 2: practical issues and specific cases". Ann Intern Med. 133: 464–70. PMID 10975965.

- ↑ Emanuel EJ, Miller FG (2001). "The ethics of placebo-controlled trials--a middle ground". N. Engl. J. Med. 345 (12): 915–9. PMID 11565527.

- ↑ Cobb LA, Thomas GI, Dillard DH, Merendino KA, Bruce RA (1959). "An evaluation of internal-mammary-artery ligation by a double-blind technic". N. Engl. J. Med. 260 (22): 1115–8. PMID 13657350.

- ↑ Dimond EG, Kittle CF, Crockett JE (1960). "Comparison of internal mammary artery ligation and sham operation for angina pectoris". Am. J. Cardiol. 5: 483–6. PMID 13816818.

- ↑ Moseley JB, O'Malley K, Petersen NJ; et al. (2002). "A controlled trial of arthroscopic surgery for osteoarthritis of the knee". N. Engl. J. Med. 347 (2): 81–8. doi:10.1056/NEJMoa013259. PMID 12110735.

- ↑ Tenery R, Rakatansky H, Riddick FA; et al. (2002). "Surgical "placebo" controls". Ann. Surg. 235 (2): 303–7. PMID 11807373.

- ↑ Morris AH, Wallace CJ, Menlove RL, Clemmer TP, Orme JF, Weaver LK; et al. (1994). "Randomized clinical trial of pressure-controlled inverse ratio ventilation and extracorporeal CO2 removal for adult respiratory distress syndrome". Am J Respir Crit Care Med. 149 (2 Pt 1): 295–305. doi:10.1164/ajrccm.149.2.8306022. PMID 8306022.

- ↑ East TD, Heermann LK, Bradshaw RL, Lugo A, Sailors RM, Ershler L; et al. (1999). "Efficacy of computerized decision support for mechanical ventilation: results of a prospective multi-center randomized trial". Proc AMIA Symp: 251–5. PMC 2232746. PMID 10566359.

- ↑ Schuetz P, Christ-Crain M, Thomann R, Falconnier C, Wolbers M, Widmer I; et al. (2009). "Effect of procalcitonin-based guidelines vs standard guidelines on antibiotic use in lower respiratory tract infections: the ProHOSP randomized controlled trial". JAMA. 302 (10): 1059–66. doi:10.1001/jama.2009.1297. PMID 19738090.

- ↑ Mandell LA, Wunderink RG, Anzueto A, Bartlett JG, Campbell GD, Dean NC; et al. (2007). "Infectious Diseases Society of America/American Thoracic Society consensus guidelines on the management of community-acquired pneumonia in adults". Clin Infect Dis. 44 Suppl 2 (Suppl 2): S27–72. doi:10.1086/511159. PMC 7107997. PMID 17278083.

- ↑ Huang DT, Yealy DM, Filbin MR, Brown AM, Chang CH, Doi Y; et al. (2018). "Procalcitonin-Guided Use of Antibiotics for Lower Respiratory Tract Infection". N Engl J Med. 379 (3): 236–249. doi:10.1056/NEJMoa1802670. PMC 6197800. PMID 29781385. Review in: Ann Intern Med. 2018 Oct 16;169(8):JC39

- ↑ Lachin JM, Matts JP, Wei LJ (1988). "Randomization in Clinical Trials: Conclusions and Recommendations". Controlled Clinical Trials. 9 (4): 365–74. PMID 3203526.

- ↑ Schulz KF, Grimes DA (2002). "Allocation concealment in randomised trials: defending against deciphering". Lancet. 359: 614–8. PMID 11867132.

- ↑ Pildal J, Chan AW; et al. (2005). "Comparison of descriptions of allocation concealment in trial protocols and the published report: cohort study". BMJ. 330: 1049. PMID 15817527.

- ↑ Allocation concealment and blinding: when ignorance is bliss

- ↑ World Medical Association. "Declaration of Helsinki: Ethical Principles for Medical Research Involving Human Subjects". Retrieved 2007-11-17.

- ↑ "World Medical Association declaration of Helsinki. Recommendations guiding physicians in biomedical research involving human subjects". JAMA. 277: 925–6. 1997. PMID 9062334.

- ↑ Michels KB, Rothman KJ (2003). "Update on unethical use of placebos in randomised trials". Bioethics. 17: 188–204. PMID 12812185.

- ↑ 30.0 30.1 30.2 Kaul S, Diamond GA (2006). "Good enough: a primer on the analysis and interpretation of noninferiority trials". Ann Intern Med. 145: 62–9. PMID 16818930.

- ↑ 31.0 31.1 31.2 Piaggio G, Elbourne DR, Altman DG, Pocock SJ, Evans SJ (2006). "Reporting of noninferiority and equivalence randomized trials: an extension of the CONSORT statement". JAMA. 295 (10): 1152–60. doi:10.1001/jama.295.10.1152. PMID 16522836.

- ↑ Savović J, Jones HE, Altman DG, Harris RJ, Jüni P, Pildal J; et al. (2012). "Influence of Reported Study Design Characteristics on Intervention Effect Estimates From Randomized, Controlled Trials". Ann Intern Med. doi:10.7326/0003-4819-157-6-201209180-00537. PMID 22945832.

- ↑ Psaty BM, Kronmal RA (2008). "Reporting mortality findings in trials of rofecoxib for Alzheimer disease or cognitive impairment: a case study based on documents from rofecoxib litigation". JAMA. 299 (15): 1813–7. doi:10.1001/jama.299.15.1813. PMID 18413875.

- ↑ Madigan D, Sigelman DW, Mayer JW, Furberg CD, Avorn J (2012). "Under-reporting of cardiovascular events in the rofecoxib Alzheimer disease studies". Am Heart J. 164 (2): 186–93. doi:10.1016/j.ahj.2012.05.002. PMID 22877803.

- ↑ 35.0 35.1 Melander H; et al. (2003). "Evidence b(i)ased medicine--selective reporting from studies sponsored by pharmaceutical industry: review of studies in new drug applications". BMJ. 326: 1171–3. doi:10.1136/bmj.326.7400.1171. PMID 12775615.

- ↑ DeAngelis CD, Fontanarosa PB (2010). "Ensuring integrity in industry-sponsored research: primum non nocere, revisited". JAMA. 303 (12): 1196–8. doi:10.1001/jama.2010.337. PMID 20332409.

- ↑ Fontanarosa PB, Flanagin A, DeAngelis CD (2005). "Reporting conflicts of interest, financial aspects of research, and role of sponsors in funded studies". JAMA. 294 (1): 110–1. doi:10.1001/jama.294.1.110. PMID 15998899.

- ↑ Wager E, Mhaskar R, Warburton S, Djulbegovic B (2010). "JAMA published fewer industry-funded studies after introducing a requirement for independent statistical analysis". PLoS One. 5 (10): e13591. doi:10.1371/journal.pone.0013591. PMC 2962640. PMID 21042585.

- ↑ Dechartres A, Trinquart L, Boutron I, Ravaud P (2013). "Influence of trial sample size on treatment effect estimates: meta-epidemiological study". BMJ. 346: f2304. doi:10.1136/bmj.f2304. PMC 3634626. PMID 23616031.

- ↑ Chang L, Dhruva SS, Chu J, Bero LA, Redberg RF (2015). "Selective reporting in trials of high risk cardiovascular devices: cross sectional comparison between premarket approval summaries and published reports". BMJ. 350: h2613. doi:10.1136/bmj.h2613.

- ↑ De Angelis C, Drazen JM, Frizelle FA; et al. (2004). "Clinical trial registration: a statement from the International Committee of Medical Journal Editors". The New England journal of medicine. 351 (12): 1250–1. doi:10.1056/NEJMe048225. PMID 15356289.

- ↑ Food and Drug Administration Amendments Act of 2007. https://www.gpo.gov/fdsys/pkg/PLAW-110publ85/pdf/PLAW-110publ85.pdf

- ↑ DeVito NJ, Bacon S, Goldacre B (2020). "Compliance with legal requirement to report clinical trial results on ClinicalTrials.gov: a cohort study". Lancet. 395 (10221): 361–369. doi:10.1016/S0140-6736(19)33220-9. PMID 31958402.

- ↑ 44.0 44.1 Goldacre B, DeVito NJ, Heneghan C, Irving F, Bacon S, Fleminger J; et al. (2018). "Compliance with requirement to report results on the EU Clinical Trials Register: cohort study and web resource". BMJ. 362: k3218. doi:10.1136/bmj.k3218. PMC 6134801. PMID 30209058.

- ↑ Law MR, Kawasumi Y, Morgan SG (2011). "Despite law, fewer than one in eight completed studies of drugs and biologics are reported on time on ClinicalTrials.gov". Health Aff (Millwood). 30 (12): 2338–45. doi:10.1377/hlthaff.2011.0172. PMID 22147862.

- ↑ Mathieu S, Boutron I, Moher D, Altman DG, Ravaud P (2009). "Comparison of registered and published primary outcomes in randomized controlled trials". JAMA. 302 (9): 977–84. doi:10.1001/jama.2009.1242. PMID 19724045.

- ↑ Kasenda B, Schandelmaier S, Sun X, von Elm E, You J, Blümle A; et al. (2014). "Subgroup analyses in randomised controlled trials: cohort study on trial protocols and journal publications". BMJ. 349: g4539. doi:10.1136/bmj.g4539. PMC 4100616. PMID 25030633.

- ↑ Ross JS, Mulvey GK, Hines EM, Nissen SE, Krumholz HM (2009). "Trial publication after registration in ClinicalTrials.Gov: a cross-sectional analysis". PLoS Med. 6 (9): e1000144. doi:10.1371/journal.pmed.1000144. PMC 2728480. PMID 19901971.

- ↑ Ramachandran R, Morten CJ, Ross JS (2021). "Strengthening the FDA's Enforcement of ClinicalTrials.gov Reporting Requirements". JAMA. doi:10.1001/jama.2021.19773. PMID 34766971.

- ↑ Emdin C, Odutayo A, Hsiao A, Shakir M, Hopewell S, Rahimi K; et al. (2015). "Association of cardiovascular trial registration with positive study findings: Epidemiological Study of Randomized Trials (ESORT)". JAMA Intern Med. 175 (2): 304–7. doi:10.1001/jamainternmed.2014.6924. PMID 25545611.

- ↑ Dechartres A, Ravaud P, Atal I, Riveros C, Boutron I (2016). "Association between trial registration and treatment effect estimates: a meta-epidemiological study". BMC Med. 14 (1): 100. doi:10.1186/s12916-016-0639-x. PMC 4932748. PMID 27377062.

- ↑ Odutayo A, Emdin CA, Hsiao AJ, Shakir M, Copsey B, Dutton S; et al. (2017). "Association between trial registration and positive study findings: cross sectional study (Epidemiological Study of Randomized Trials-ESORT)". BMJ. 356: j917. doi:10.1136/bmj.j917. PMID 28292744.

- ↑ Rasmussen N, Lee K, Bero L (2009). "Association of trial registration with the results and conclusions of published trials of new oncology drugs". Trials. 10: 116. doi:10.1186/1745-6215-10-116. PMC 2811705. PMID 20015404.

- ↑ 54.0 54.1 54.2 Montori VM, Devereaux PJ, Adhikari NK; et al. "Randomized trials stopped early for benefit: a systematic review". JAMA. 294 (17): 2203–9. doi:10.1001/jama.294.17.2203. PMID 16264162.

- ↑ Bassler D, Briel M, Montori VM, Lane M, Glasziou P, Zhou Q; et al. "Stopping randomized trials early for benefit and estimation of treatment effects: systematic review and meta-regression analysis". JAMA. 303 (12): 1180–7. doi:10.1001/jama.2010.310. PMID 20332404.

- ↑ Trotta F, Apolone G, Garattini S, Tafuri G (2008). "Stopping a trial early in oncology: for patients or for industry?". Ann Oncol. mdn042. http://dx.doi.org/10.1093/annonc/mdn042

- ↑ Slutsky AS, Lavery JV. "Data Safety and Monitoring Boards". N. Engl. J. Med. 350 (11): 1143–7. doi:10.1056/NEJMsb033476. PMID 15014189.

- ↑ Borer JS, Gordon DJ, Geller NL. "When should data and safety monitoring committees share interim results in cardiovascular trials?". JAMA. 299 (14): 1710–2. doi:10.1001/jama.299.14.1710. PMID 18398083.

- ↑ 59.0 59.1 Bassler D, Montori VM, Briel M, Glasziou P, Guyatt G. "Early stopping of randomized clinical trials for overt efficacy is problematic". J Clin Epidemiol. 61 (3): 241–6. doi:10.1016/j.jclinepi.2007.07.016. PMID 18226746.

- ↑ 60.0 60.1 Pocock SJ. "When (not) to stop a clinical trial for benefit". JAMA. 294 (17): 2228–30. doi:10.1001/jama.294.17.2228. PMID 16264167.

- ↑ 61.0 61.1 61.2 Schulz KF, Grimes DA. "Multiplicity in randomised trials II: subgroup and interim analyses". Lancet. 365 (9471): 1657–61. doi:10.1016/S0140-6736(05)66516-6. PMID 15885299.

- ↑ Grant A. "Stopping clinical trials early". BMJ. 329 (7465): 525–6. doi:10.1136/bmj.329.7465.525. PMID 15345605.

- ↑ O'Brien PC, Fleming TR. "A multiple testing procedure for clinical trials". Biometrics. 35 (3): 549–56. PMID 497341.

- ↑ Bauer P, Köhne K. "Evaluation of experiments with adaptive interim analyses". Biometrics. 50 (4): 1029–41. PMID 7786985. This method was used by PMID 18184958.

- ↑ DeMets, David L.; Susan S. Ellenberg; Fleming, Thomas J. Data Monitoring Committees in Clinical Trials: a Practical Perspective. New York: J. Wiley & Sons. ISBN 0-471-48986-7.

- ↑ 66.0 66.1 Pocock SJ, Hughes MD (1989). "Practical problems in interim analyses, with particular regard to estimation". Control Clin Trials. 10 (4 Suppl): 209S–221S. PMID 2605969.

- ↑ Bassler, Dirk (2010-03-24). "Stopping Randomized Trials Early for Benefit and Estimation of Treatment Effects: Systematic Review and Meta-regression Analysis". JAMA. 303 (12): 1180–1187. doi:10.1001/jama.2010.310. Retrieved 2010-03-25.

- ↑ Pocock S, Wang D, Wilhelmsen L, Hennekens CH (2005). "The data monitoring experience in the Candesartan in Heart Failure Assessment of Reduction in Mortality and morbidity (CHARM) program". Am. Heart J. 149 (5): 939–43. doi:10.1016/j.ahj.2004.10.038. PMID 15894981.

- ↑ Wheatley K, Clayton D (2003). "Be skeptical about unexpected large apparent treatment effects: the case of an MRC AML12 randomization". Control Clin Trials. 24 (1): 66–70. PMID 12559643.

- ↑ Abraham E, Reinhart K, Opal S; et al. (2003). "Efficacy and safety of tifacogin (recombinant tissue factor pathway inhibitor) in severe sepsis: a randomized controlled trial". JAMA. 290 (2): 238–47. doi:10.1001/jama.290.2.238. PMID 12851279.

- ↑ Hughes MD, Pocock SJ (1988). "Stopping rules and estimation problems in clinical trials". Stat Med. 7 (12): 1231–42. PMID 3231947.

- ↑ Goodman SN (2007). "Stopping at nothing? Some dilemmas of data monitoring in clinical trials". Ann. Intern. Med. 146 (12): 882–7. PMID 17577008.

- ↑ Statistical Monitoring of Clinical Trials: Fundamentals for Investigators. Berlin: Springer. 2005. ISBN 0-387-27781-1.

- ↑ Lachin JM (2006). "Operating characteristics of sample size re-estimation with futility stopping based on conditional power". Stat Med. 25 (19): 3348–65. doi:10.1002/sim.2455. PMID 16345019.

- ↑ Naudet F, Sakarovitch C, Janiaud P, Cristea I, Fanelli D, Moher D; et al. (2018). "Data sharing and reanalysis of randomized controlled trials in leading biomedical journals with a full data sharing policy: survey of studies published in The BMJ and PLOS Medicine". BMJ. 360: k400. doi:10.1136/bmj.k400. PMC 5809812. PMID 29440066.

- ↑ Mello MM, Clarridge BR, Studdert DM (2005). "Academic medical centers' standards for clinical-trial agreements with industry". N Engl J Med. 352 (21): 2202–10. doi:10.1056/NEJMsa044115. PMID 15917385.

- ↑ Chan AW, Tetzlaff JM, Gøtzsche PC, Altman DG, Mann H, Berlin JA; et al. (2013). "SPIRIT 2013 explanation and elaboration: guidance for protocols of clinical trials". BMJ. 346: e7586. doi:10.1136/bmj.e7586. PMC 3541470. PMID 23303884.

- ↑ Mello MM, Murtagh L, Joffe S, Taylor PL, Greenberg Y, Campbell EG (2018). "Beyond financial conflicts of interest: Institutional oversight of faculty consulting agreements at schools of medicine and public health". PLoS One. 13 (10): e0203179. doi:10.1371/journal.pone.0203179. PMC 6205599. PMID 30372431.

- ↑ Rasmussen K, Bero L, Redberg R, Gøtzsche PC, Lundh A (2018). "Collaboration between academics and industry in clinical trials: cross sectional study of publications and survey of lead academic authors". BMJ. 363: k3654. doi:10.1136/bmj.k3654. PMC 6169401. PMID 30282703.

- ↑ Kasenda B, von Elm E, You JJ, Blümle A, Tomonaga Y, Saccilotto R; et al. (2016). "Agreements between Industry and Academia on Publication Rights: A Retrospective Study of Protocols and Publications of Randomized Clinical Trials". PLoS Med. 13 (6): e1002046. doi:10.1371/journal.pmed.1002046. PMC 4924795. PMID 27352244.

- ↑ Baum ML, Anish DS, Chalmers TC, Sacks HS, Smith H, Fagerstrom RM (1981). "A survey of clinical trials of antibiotic prophylaxis in colon surgery: evidence against further use of no-treatment controls". N Engl J Med. 305 (14): 795–9. doi:10.1056/NEJM198110013051404. PMID 7266633.

- ↑ Lau J, Antman EM, Jimenez-Silva J, Kupelnick B, Mosteller F, Chalmers TC (1992). "Cumulative meta-analysis of therapeutic trials for myocardial infarction". N Engl J Med. 327 (4): 248–54. doi:10.1056/NEJM199207233270406. PMID 1614465.

- ↑ Robinson KA, Goodman SN (2011). "A systematic examination of the citation of prior research in reports of randomized, controlled trials". Ann Intern Med. 154 (1): 50–5. doi:10.1059/0003-4819-154-1-201101040-00007. PMID 21200038.

- ↑ Lau J, Schmid CH, Chalmers TC (1995). "Cumulative meta-analysis of clinical trials builds evidence for exemplary medical care". J Clin Epidemiol. 48 (1): 45–57, discussion 59-60. PMID 7853047.

- ↑ 85.0 85.1 Hill KP, Ross JS, Egilman DS, Krumholz HM (2008). "The ADVANTAGE seeding trial: a review of internal documents". Ann. Intern. Med. 149 (4): 251–8. PMID 18711155.

- ↑ Krumholz SD, Egilman DS, Ross JS (2011). "Study of neurontin: titrate to effect, profile of safety (STEPS) trial: a narrative account of a gabapentin seeding trial". Arch Intern Med. 171 (12): 1100–7. doi:10.1001/archinternmed.2011.241. PMID 21709111.

- ↑ Lundh A, Lexchin J, Mintzes B, Schroll JB, Bero L (2017). "Industry sponsorship and research outcome". Cochrane Database Syst Rev. 2: MR000033. doi:10.1002/14651858.MR000033.pub3. PMC 8132492. PMID 28207928.

- ↑ Little RJ, D'Agostino R, Cohen ML, Dickersin K, Emerson SS, Farrar JT; et al. (2012). "The prevention and treatment of missing data in clinical trials". N Engl J Med. 367 (14): 1355–60. doi:10.1056/NEJMsr1203730. PMC 3771340. PMID 23034025.

- ↑ Fleming TR (2011). "Addressing missing data in clinical trials". Ann Intern Med. 154 (2): 113–7. doi:10.1059/0003-4819-154-2-201101180-00010. PMID 21242367.

- ↑ Hauser WA, Rich SS, Annegers JF, Anderson VE (1990). "Seizure recurrence after a 1st unprovoked seizure: an extended follow-up". Neurology. 40 (8): 1163–70. PMID 2381523.

- ↑ Yavchitz A, Boutron I, Bafeta A, Marroun I, Charles P, Mantz J; et al. (2012). "Misrepresentation of randomized controlled trials in press releases and news coverage: a cohort study". PLoS Med. 9 (9): e1001308. doi:10.1371/journal.pmed.1001308. PMC 3439420. PMID 22984354.

See also

- Drug development

- Double-blind

- Evidence-based medicine

- Hypothesis testing

- Intention to treat analysis

- Medicine

- Meta-analysis

- Randomization

- Statistical inference

- Systematic review

External links

- A humorous look at problems with requiring randomized studies in medicine

- Directory of randomization software and services

- Power and bias in adaptively randomized clinical trials

- Design and analysis of randomized controlled trials using simulations

- Adaptive randomization software

- Lessons Learned from a Randomized Study of Multisystemic Therapy in Canada
